The focal plane of the future LSST Camera. Credit: Jacqueline Orrell/SLAC National Accelerator Laboratory/NSF/DOE/Rubin Obs/AURA

Unraveling the Universe’s Mysteries

CMU researchers harness astrophysical data to change how we think about space

Two projects from Mellon College of Science researchers aim to revolutionize astronomy. The first will create new software platforms to analyze the large astronomical datasets produced by the upcoming Legacy Survey of Space and Time, and the second will advance the search for dark matter using data collected by the newly launched James Webb Space Telescope.

“Understanding our universe remains one of the largest open questions in science. The Legacy Survey of Space and Time and James Webb Telescope will provide us with unprecedented data that will help us to better answer these questions,” said Rebecca W. Doerge, Glen de Vries Dean of the Mellon College of Science. “Carnegie Mellon has the unique set of expertise that is needed to lead these projects and create the future of science.”

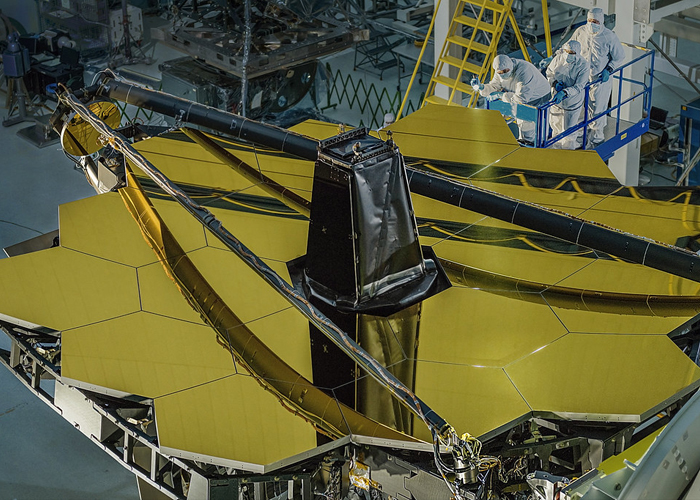

Staff at NASA’s Goddard Space Flight Center work on the James Webb Space Telescope’s primary mirror. Credit: NASA/Chris Gunn

The Vera C. Rubin Observatory in northern Chile will generate large astronomical datasets as it carries out the upcoming Legacy Survey of Space and Time. Credit: Rubin Obs/NSF/AURA

Pioneering Platforms

Carnegie Mellon University and the University of Washington are working together as part of an expansive, multi-year collaboration to create new software platforms to analyze large astronomical datasets generated by the upcoming Legacy Survey of Space and Time (LSST), which will be carried out by the Vera C. Rubin Observatory in northern Chile.

The open-source platforms are part of the new LSST Interdisciplinary Network for Collaboration and Computing (LINCC) and will fundamentally change how scientists use modern computational methods to make sense of large amounts of data.

Researchers from CMU’s Department of Physics in the Mellon College of Science, the Department of Statistics and Data Science in the Dietrich College of Humanities and Social Sciences, the Department of Electrical and Computer Engineering in the College of Engineering, and the Pittsburgh Supercomputing Center will contribute to this project.

The Rubin Observatory, a joint initiative of the National Science Foundation and the Department of Energy, will collect and process more than 20 terabytes of data each night, and up to 10 petabytes each year for 10 years, to build detailed composite images of the southern sky. Astrophysicists estimate that the survey will detect and capture images of 30 billion stars, galaxies, stellar clusters and asteroids. Each point in the sky will be visited around 1,000 times over the survey’s 10 years, providing researchers with valuable time series data.
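As a rough consistency check on the figures above, the arithmetic can be sketched in a few lines of Python. The nightly and yearly volumes, the 10-year duration and the 1,000 visits come from the article; the 365 observing nights per year and the decimal conversion of 1,000 terabytes per petabyte are simplifying assumptions, and the function names are illustrative only.

```python
# Back-of-the-envelope check of the survey figures quoted in the article.
TB_PER_NIGHT = 20        # article: "more than 20 terabytes of data each night"
PB_PER_YEAR_MAX = 10     # article: "up to 10 petabytes each year"
SURVEY_YEARS = 10        # article: survey duration
VISITS_PER_POINT = 1000  # article: "around 1,000 times"

TB_PER_PB = 1000         # assumption: decimal units
NIGHTS_PER_YEAR = 365    # assumption: upper bound, ignores weather and downtime


def raw_pb_per_year(tb_per_night=TB_PER_NIGHT, nights=NIGHTS_PER_YEAR):
    """Petabytes collected per year if every night yields tb_per_night."""
    return tb_per_night * nights / TB_PER_PB


def total_pb(pb_per_year=PB_PER_YEAR_MAX, years=SURVEY_YEARS):
    """Upper bound on the data volume accumulated over the whole survey."""
    return pb_per_year * years


def mean_days_between_visits(visits=VISITS_PER_POINT, years=SURVEY_YEARS):
    """Average gap between visits to one point, assuming even spacing."""
    return years * 365 / visits


if __name__ == "__main__":
    print(f"Raw data per year: about {raw_pb_per_year():.1f} PB")
    print(f"Survey total: up to {total_pb()} PB")
    print(f"Mean revisit gap: about {mean_days_between_visits():.2f} days")
```

Under these assumptions, nightly collection alone accounts for roughly 7 petabytes a year, in line with the quoted ceiling of 10, and each point in the sky is revisited every three to four days on average.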

Scientists plan to use this unprecedented dataset to address fundamental questions about our universe, such as the formation of our solar system, the course of near-Earth asteroids, the birth and death of stars, the nature of dark matter and dark energy and the universe’s murky early years.

Rachel Mandelbaum, professor of physics and member of the McWilliams Center for Cosmology at Carnegie Mellon, will co-lead the project with Andrew Connolly, a professor of astronomy and director of the eScience Institute at the University of Washington.

“Many of the LSST’s science objectives share common traits and computational challenges,” Mandelbaum said. “If we develop our algorithms and analysis frameworks with forethought, we can use them to enable many of the survey’s core science objectives.”

Together, the teams of programmers and scientists at both universities will create platforms using professional software engineering practices and tools. Specifically, they will create a “cloud-first” system that also supports high-performance computing systems in partnership with the Pittsburgh Supercomputing Center. The tools developed will support a “census of our solar system” that will chart the courses of asteroids; help researchers to understand how the universe changes with time; and build a 3D view of the universe’s history.

“Tools that utilize the power of cloud computing will allow any researcher to search and analyze data at the scale of the LSST, not just speeding up the rate at which we make discoveries but changing the scientific questions that we can ask,” Connolly said.

Detecting Dark Matter

Carnegie Mellon University Associate Professor of Physics Matthew Walker is among the first researchers planning to take advantage of the data from the James Webb Space Telescope.

Walker is principal investigator of a program that is making use of data collected in the Webb telescope’s first year of operation.

“The James Webb Space Telescope will open a new window onto the history of the universe, letting us witness the first stars illuminating the first galaxies,” Walker said. “For my group, the top-level science goal is to learn about the nature of dark matter.”

This theoretical form of matter has never been directly detected but is estimated to comprise most of the matter in the universe. Various theories have arisen about the form dark matter takes, with the currently dominant cold dark matter theory predicting that it exists in clumps called halos with small basic units, as opposed to galaxy-sized agglomerations.

“One of the key predictions we’re trying to test of cold dark matter is the existence of these so-called sub-galactic dark matter halos,” Walker said. “That’s something people have been trying to test for decades.”

These lower-mass collections of dark matter don’t have stars in them, making them difficult to detect and observe. Two tests have been devised to seek out sub-galactic dark matter halos, Walker explained, but both have so far produced inconclusive results.

“Here, we’re introducing a new test,” Walker said. “We’re looking for perturbations from these sub-galactic dark matter halos on very fragile gravitational systems.”

The most fragile gravitational systems that can be observed are “wide” binary stars — pairs of stars orbiting each other but separated by more than 1,000 times the distance between the sun and Earth, making the gravitational forces between them very weak.

“That means if a sub-galactic dark matter halo zips through the neighborhood, its gravitational effects can be more than sufficient to destroy this wide binary system,” Walker said.
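To give a sense of how weakly bound such a pair is, the sketch below applies Kepler's third law in solar units to a wide binary. The choice of two sun-like stars exactly 1,000 astronomical units apart on a circular orbit is an assumption made for illustration, not a description of any system in Walker's program, and the function names are hypothetical.

```python
import math

EARTH_ORBITAL_SPEED_KM_S = 29.78  # Earth's mean orbital speed around the sun


def orbital_period_years(separation_au, total_mass_msun):
    """Kepler's third law in solar units: P^2 = a^3 / M."""
    return math.sqrt(separation_au ** 3 / total_mass_msun)


def relative_acceleration(separation_au, companion_mass_msun=1.0):
    """Pull from the companion, relative to the sun's pull on Earth at 1 AU."""
    return companion_mass_msun / separation_au ** 2


def relative_orbital_speed_km_s(separation_au, total_mass_msun):
    """Relative speed on a circular orbit, scaled from Earth's orbital speed."""
    return EARTH_ORBITAL_SPEED_KM_S * math.sqrt(total_mass_msun / separation_au)


if __name__ == "__main__":
    separation = 1000.0  # AU, the article's lower bound for a "wide" binary
    total_mass = 2.0     # solar masses; assumption: two sun-like stars
    print(f"Period: {orbital_period_years(separation, total_mass):,.0f} years")
    print(f"Relative pull: {relative_acceleration(separation):.1e}")
    print(f"Orbital speed: {relative_orbital_speed_km_s(separation, total_mass):.2f} km/s")
```

For this assumed pair, one orbit lasts roughly 22,000 years and each star feels a pull about a million times weaker than the one Earth feels from the sun, which is why even a modest passing mass could separate the two.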

Walker and his team will examine the data from the James Webb Space Telescope to see whether any wide binary star systems exist in dwarf galaxies, which are expected to be dense in dark matter. If they are found, their very existence would make the cold dark matter theory less likely, because the sub-galactic halos it predicts should have disrupted such fragile pairs.

“Nobody has been able to say one way or another whether wide binaries exist in the dwarf galaxies for lack of adequate instrumentation,” Walker said. “But it’s an area the James Webb Space Telescope now will allow us to explore.”

Carnegie Mellon’s leadership in both projects comes as the university embarks on an innovative future of science initiative. The initiative will revolutionize science by leveraging the university’s strengths in foundational sciences, artificial intelligence, robotics, engineering and data analytics.