Parenting a 3-Year-Old Robot

CMU, Meta AI researchers develop robotic learning agent able to master multiple skills

Humans are social creatures and learn from each other, even from a young age. Infants keenly observe their parents, siblings or caregivers. They watch, imitate and replay what they see to learn skills and behaviors.

The way babies learn and explore their surroundings inspired researchers at Carnegie Mellon University and Meta AI to develop a new way to teach robots how to simultaneously learn multiple skills and leverage them to tackle unseen, everyday tasks. The researchers set out to develop a robotic AI agent with manipulation abilities equivalent to a 3-year-old child.

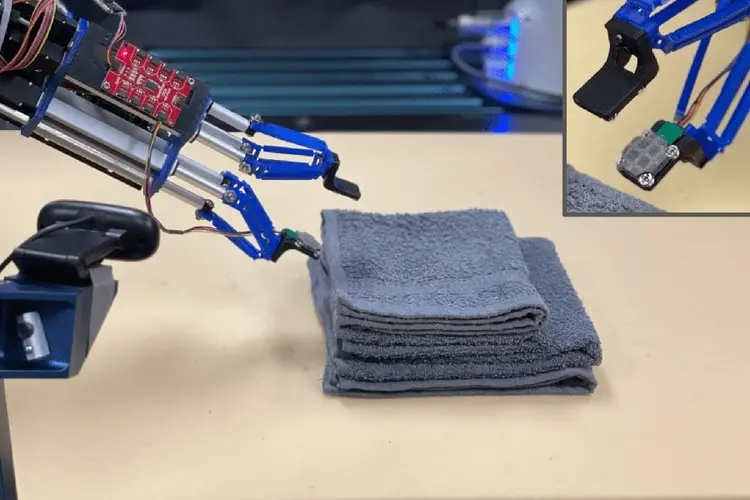

The team has announced RoboAgent, an artificial intelligence agent that leverages passive observations and active learning to enable a robot to acquire manipulation abilities on par with a toddler.

“RoboAgent is a critical milestone toward general robotic agents that are efficient learners, effective in novel situations and capable of expanding their behaviors over time,” said Vikash Kumar, adjunct faculty in the School of Computer Science’s Robotics Institute. “Current robots are highly specialized and trained for individual tasks in isolation. In contrast, we set out to create a single artificial intelligence agent capable of exhibiting a wide range of skills in unseen scenarios. RoboAgent learns like human babies, leveraging a combination of abundant passive observations and limited active play.”

RoboAgent can perform 12 manipulation skills across a variety of scenes. This research points toward a robotic learning platform that adapts to changing environments. Unlike past research, the team demonstrated their work in real environments rather than in simulation, and did so with far less data than previous projects.

“RoboAgents are capable of much richer complexity of skills than what others have achieved,” said Abhinav Gupta, an associate professor in the Robotics Institute. “We’ve shown a greater diversity of skills than anything ever achieved by a single real-world robotic agent with efficiency and a scale of generalization to unseen scenarios that is unique.”

The team’s agent learns through a combination of self-experiences and passive observations contained in internet data. As a parent would guide their child, researchers teleoperated the robot through tasks to provide it with useful self-experiences.

“The effectiveness and efficiency of our approach stem from our novel policy architecture that allows our agents to reason even with limited experiences,” said Homanga Bharadwaj, a Ph.D. student in robotics. “RoboAgent acts in response to specified text/visual goals by predicting and aggregating decisions in terms of temporal chunks of movements instead of commonly used per-timestep actions.”
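To make the idea of temporal chunks concrete, here is a minimal Python sketch, not RoboAgent's actual code. It assumes a hypothetical policy that, at every timestep, predicts a short chunk of upcoming actions, and it blends all predictions that cover the current timestep into the single action that gets executed. The names `aggregate_action`, `run_episode` and `toy_policy`, the scalar actions and the exponential weighting with a `decay` parameter are all illustrative assumptions.

```python
import math


def aggregate_action(predictions, t, decay=0.5):
    """Blend every chunk prediction that covers timestep t.

    predictions: list of (start_step, chunk) pairs, where chunk is a list of
    scalar actions predicted for steps start_step, start_step + 1, ...
    The weighting scheme (older predictions count less) is an assumption.
    """
    weighted_sum = 0.0
    weight_total = 0.0
    for start, chunk in predictions:
        offset = t - start
        if 0 <= offset < len(chunk):
            weight = math.exp(-decay * offset)
            weighted_sum += weight * chunk[offset]
            weight_total += weight
    if weight_total == 0.0:
        raise ValueError(f"no chunk covers timestep {t}")
    return weighted_sum / weight_total


def run_episode(predict_chunk, steps, decay=0.5):
    """Query the policy at every step and execute the blended action."""
    predictions = []
    executed = []
    for t in range(steps):
        predictions.append((t, predict_chunk(t)))
        executed.append(aggregate_action(predictions, t, decay))
    return executed


def toy_policy(t, chunk_len=3):
    """Hypothetical stand-in policy: predicts a simple ramp of actions."""
    return [float(t + k) for k in range(chunk_len)]


if __name__ == "__main__":
    print(run_episode(toy_policy, steps=5))
```

Compared with a per-timestep policy, each executed action here draws on several overlapping predictions, which tends to smooth the motion when individual predictions are noisy.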

Robots primarily learn from their own experiences, not from what happens passively around them. This inherent blindness to what goes on in their environment fundamentally limits both the diversity of experiences robots are exposed to and their ability to adapt to new situations. To overcome these limitations, RoboAgent learns from videos on the internet, much as babies acquire knowledge and behaviors by passively observing their surroundings.

“RoboAgent leverages the information contained in these videos to learn priors about how humans interact with objects and use various skills to successfully complete tasks,” said Mohit Sharma, a Ph.D. student in robotics. “Additionally, observing similar skills in multiple scenarios allows it to learn what is and isn't necessary to complete a task. It leverages these lessons when presented with unknown tasks or unseen environments.”

“An agent capable of this sort of learning moves us closer to a general robot that can complete a variety of tasks in diverse unseen settings and continually evolve as it gathers more experiences,” said Shubham Tulsiani, an assistant professor in the Robotics Institute. “RoboAgent can quickly train a robot using limited in-domain data while relying primarily on abundantly available free data from the internet to learn a variety of tasks. This could make robots more useful in unstructured settings like homes, hospitals and other public spaces.”

The team’s trained models, codebase, hardware drivers and, most notably, the entire data set collected for this research are open-source. That data set, RoboSet, is the largest publicly available robotics data set collected on commodity hardware. The team hopes this will enable others to reuse, adapt and pass it forward, leading to a truly foundational general robotic agent over time.

The research team includes Kumar, Tulsiani, Gupta, Bharadwaj, Sharma and Jay Vakil from Meta AI. More information about RoboAgent and RoboSet is available on the project’s website.