Experts Study Marine Mammals To Learn About Human Hearing

A CMU researcher leads work on auditory attention

Many hearing loss patients have the same complaint: They have trouble following conversations in a noisy space. Carnegie Mellon University’s Barbara Shinn-Cunningham has spent her career conducting research to better understand this problem and how it affects people at cocktail parties, coffee shops and grocery stores.

Now, along with a team of researchers from six universities, Shinn-Cunningham, the director of CMU’s Neuroscience Institute (NI) and the George A. and Helen Dunham Cowan Professor of Auditory Neuroscience, is looking for answers in an unexpected place. The researchers will conduct noninvasive experiments on free-swimming dolphins and sea lions.

Dolphins and sea lions hear differently than humans, but the way their brains make sense of sound could help Shinn-Cunningham and her team better understand the cognitive and neural mechanisms of sound processing. The researchers have received a Multidisciplinary University Research Initiative grant from the Department of Defense to investigate how animals understand and respond appropriately to the cacophony reaching their ears.

The team will conduct several different experiments.

Working with a world expert on dolphin behavior, they will train dolphins to identify target shapes, such as a spheroid or a cross, and then ask each dolphin to pick out the target from among similar, distracting shapes using its special auditory sense: echolocation. By engineering the distractor shapes to be more or less like the target, the experimenters will determine what sound features the dolphins use to "see" with sound.

In a different series of studies, the team will explore whether dolphins, like humans, learn to anticipate repeating patterns in sound, a critical step in making sense of complicated sounds in noisy settings. In this work, they will use electroencephalography (a technology used routinely with humans in laboratories and clinics) to measure neural responses from the dolphins to see if the brain shows a surprise signature when the ongoing sound pattern changes.

The Neuroscience Institute

CMU's Neuroscience Institute brings together faculty and students from across the university to conduct multidisciplinary work to advance the state of brain science.

Alexander Ruesch, a postdoctoral researcher in Shinn-Cunningham's lab, poses with a dolphin in Oahu, Hawaii. The free-swimming dolphins can choose whether and when to participate in the experiments, a benefit that gives the researchers greater insight into their behavior and health.

Later, the scientists will use sea lions to investigate whether, as in humans, different brain networks calculate the location of a sound versus the meaning of a sound. They will train the animals to respond to where a sound comes from (left or right) or what the sound is (e.g., the call of another sea lion or the sound of the trainer’s voice). Using near-infrared spectroscopy, the same technology often used in doctors’ offices on human fingertips to determine oxygen levels, the researchers will examine whether the brain regions engaged when listening to the same sounds change depending on what the sea lion is calculating.

Shinn-Cunningham said that the research could point to new approaches to developing hearing aids and other assistive listening devices, along with other benefits.

"Developing noninvasive ways of monitoring brain function in these marine mammals, including when in the wild, can give us insight into how these animals navigate in and make sense of their undersea world, which is important to conservation efforts," she said. "Additionally, learning how the brains of our marine cousins process complex acoustic scenes, and how that is similar or different to auditory processing in humans, can give us a deeper understanding of human hearing. I appreciate that we have access to phenomenal, accredited research facilities for this work."

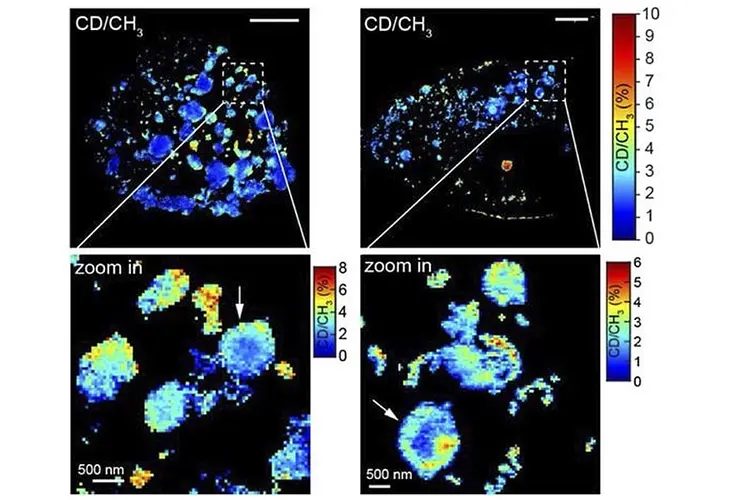

To develop those methods, the research team turned to Jana Kainerstorfer, an associate professor of biomedical engineering and an expert in multimodal imaging techniques. She and her team are currently investigating ways to apply the technologies, which are commonly used in humans, to sea lions.

"By using inherently noninvasive methods, we are able to learn something about the animals’ behavior and brain processing without interfering with their behavior," Kainerstorfer said. "It’s incredible to have people from such diverse backgrounds come together and work on a common problem."

Shinn-Cunningham is best known for her work on the phenomenon called the cocktail party effect, which describes the brain’s ability to focus on a single conversation, even in a noisy room.

"In a normal social setting, like a noisy cocktail party, our ears receive multiple, overlapping conversations coming from all directions. It is chaos," Shinn-Cunningham said. "But we're really good at focusing on one conversation and ignoring other distracting stuff. To do that, the brain has to figure out what sound comes from the juicy, interesting conversation, and then throw out everything else."

The cocktail party effect does not only occur on land. When dolphins and other animals use echolocation, emitting high-pitched clicks that bounce off objects in the water to find nearby predators and prey, they face a similar problem.

"Echolocating animals, like dolphins, have streams of information coming back at them from all directions. They might detect a whale, the seafloor and a fast-moving tiger shark all at once," she said. "To respond in time, they have to separate and organize the information quickly, focusing on the important echoes and suppressing the rest."

Researchers have been studying echolocation for decades, even imitating it with sonar, short for sound navigation and ranging. Shinn-Cunningham said even the best human sonar operator is not a match for a dolphin.

"We have sonar that has the same technical capacity as a dolphin, but sonar operators cannot search as effectively or as quickly. Humans use sonar like a lawn mower, systematically scanning every inch. But they do not prioritize information based on what they have already seen," she said. "We want to better understand how dolphins naturally organize and prioritize the complex information streams that they're getting back."

The researchers believe that a key limitation of past studies on echolocation is that they ignored the cognitive processes that allow animals to learn and categorize sounds. In the example above, the dolphin simultaneously detected the whale, seafloor and shark. But it likely was able to identify each object quickly because it had encountered them before, learned from its experiences, and knew how to group together echoes from each, forming an internal representation of each "object." That prior knowledge probably also automatically made it focus on and react to the tiger shark quickly, because it knew the shark was important.

"This is something mammals, including humans, do every day. For example, a human listener is easily able to recognize their own name even when it is spoken by an unfamiliar voice or by someone with an accent," Shinn-Cunningham said. "This could be the key to helping us understand how this works."

Additional team members include Alexander Pei, a doctoral student in electrical and computer engineering at CMU; Alexander Ruesch, a postdoctoral NI research associate; Matt Schalles, a special faculty-researcher in NI; Peter Tyack, an emeritus research scholar at Woods Hole Oceanographic Institution; John Buck, a professor at the University of Massachusetts Dartmouth; Heidi Harley, a professor at the New College of Florida; Wu-Jung Lee, a senior oceanographer at the University of Washington Applied Physics Lab; and Bogdan Popa and Alex Shorter, both assistant professors at the University of Michigan.