The Video Event Reconstruction and Analysis (VERA) System — Shooter Localization from Social Media Videos

Built on established machine learning techniques and physics models, the VERA system estimates a shooter's location from as few as a couple of user-generated videos that capture the sound of the gunshots. A collaboration between CHRS, CMU's Language Technologies Institute, and SITU Research, VERA was released in 2019 as an open-source tool, free for the general public to use.
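The physics model VERA uses is not spelled out here, so the following is only a minimal sketch of one common approach, under stated assumptions. When a camera is near the line of fire of a supersonic bullet, it records the bullet's shockwave ("crack") before the muzzle blast ("bang"). If the bullet is assumed to travel straight toward the camera at a constant, known speed, the crack-bang delay yields a distance estimate. Distance estimates from several cameras at known positions can then be intersected, here with a brute-force grid search. The function names, the 900 m/s bullet speed, the 343 m/s speed of sound, and the local metre grid are illustrative assumptions, not VERA's implementation.

```python
import math


def shooter_distance(delay_s: float, bullet_speed: float = 900.0,
                     sound_speed: float = 343.0) -> float:
    """Estimate camera-to-shooter distance (m) from the crack-bang delay (s).

    Simplified model (assumption): the bullet flies straight at the camera at a
    constant supersonic speed, so the shockwave arrives after D / bullet_speed
    and the muzzle blast after D / sound_speed. Solving
    delay = D / sound_speed - D / bullet_speed for D gives the return value.
    """
    if bullet_speed <= sound_speed:
        raise ValueError("model requires a supersonic bullet")
    if delay_s < 0:
        raise ValueError("delay must be non-negative")
    return delay_s * sound_speed * bullet_speed / (bullet_speed - sound_speed)


def locate(cameras, distances, extent=1000.0, step=5.0):
    """Grid-search the 2-D point whose distances to the cameras best match.

    cameras: list of (x, y) camera positions in metres, in a hypothetical
    local frame. Returns the grid point that minimises the sum of squared
    differences between its distance to each camera and that camera's estimate.
    """
    best, best_err = None, math.inf
    n = int(round(2 * extent / step)) + 1
    for i in range(n):
        x = -extent + i * step
        for j in range(n):
            y = -extent + j * step
            err = sum((math.hypot(x - cx, y - cy) - d) ** 2
                      for (cx, cy), d in zip(cameras, distances))
            if err < best_err:
                best, best_err = (x, y), err
    return best


# Toy check: three cameras and a hypothetical shooter at (300, 400).
cams = [(0.0, 0.0), (600.0, 0.0), (0.0, 600.0)]
dists = [math.hypot(300 - cx, 400 - cy) for cx, cy in cams]
print(locate(cams, dists))              # (300.0, 400.0)
print(round(shooter_distance(1.0), 1))  # 554.2
```

With only two cameras, the two distance circles generally cross at two mirror-image points, so a real system needs a third view or other constraints (for example, the known layout of the scene) to choose between them; this grid search simply returns whichever minimum it reaches first.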

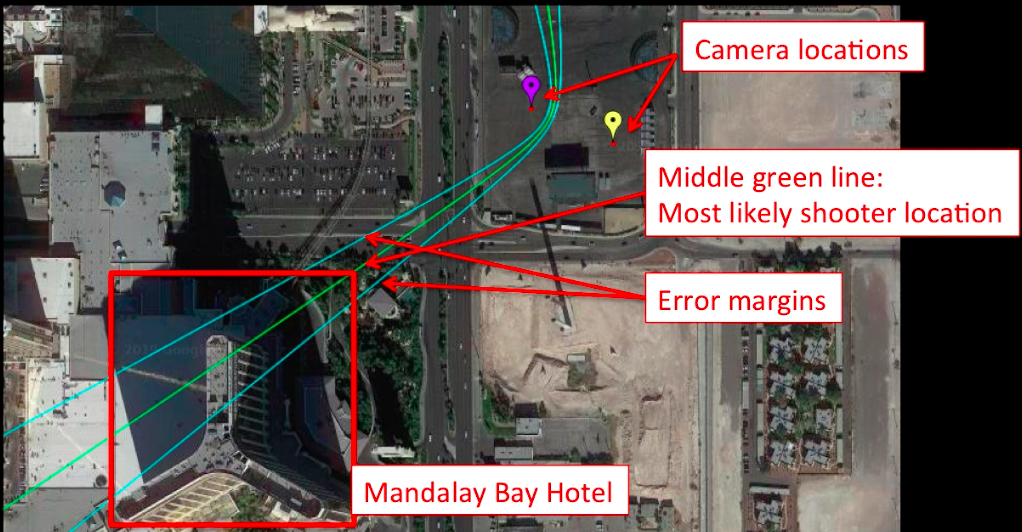

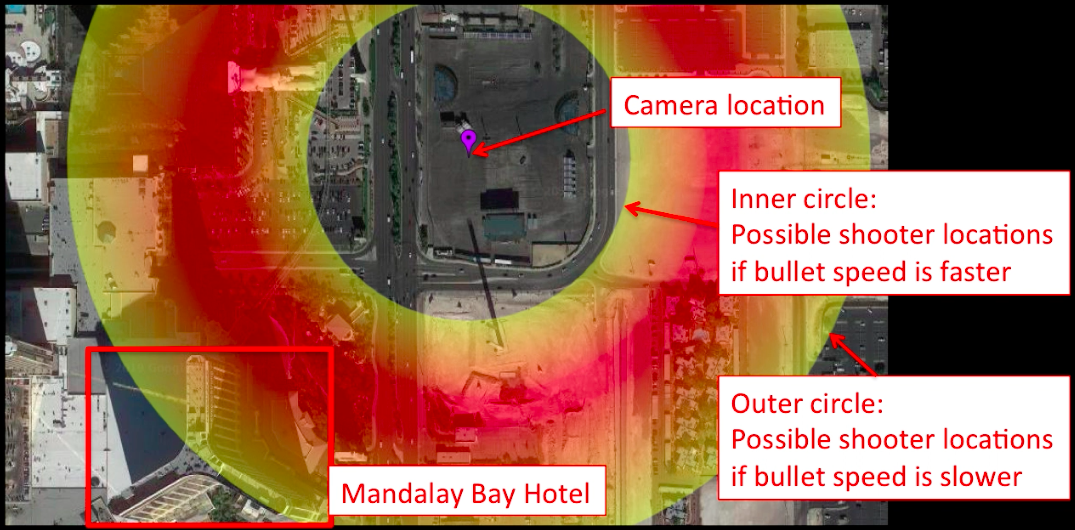

In these images, VERA is tested on smartphone footage captured during the first minute of the 2017 mass shooting in Las Vegas, Nevada. The system accurately estimated that the shooter was in the north wing of the Mandalay Bay hotel, and even accounting for its margin of error, the estimate still pointed to the hotel as the probable location.

Technical Details

This new tool brings together several capabilities we have developed over the past few years to enable the reconstruction and analysis of events captured on video: video synchronization and geolocation, which order unstructured videos that lack metadata in time and space, and sound recognition algorithms.
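Video synchronization is described here only at a high level. One standard technique for aligning two recordings of the same event, shown below as a minimal pure-Python sketch, is to choose the time offset that maximizes the cross-correlation of their audio signals. The function name and the toy spike signals are hypothetical, and this is not presented as VERA's method; a practical system would more likely correlate resampled audio or audio features and use an FFT-based correlation for speed.

```python
def best_lag(a: list[float], b: list[float], max_lag: int) -> int:
    """Return the lag (in samples) that best aligns signal b to signal a.

    A positive lag means an event at index i in a appears at index i + lag in b.
    Each candidate lag is scored by the raw (unnormalized) cross-correlation
    over the samples where the two signals overlap.
    """
    best, best_score = 0, float("-inf")
    for lag in range(-max_lag, max_lag + 1):
        score = 0.0
        for i, x in enumerate(a):
            j = i + lag
            if 0 <= j < len(b):
                score += x * b[j]
        if score > best_score:
            best, best_score = lag, score
    return best


# Toy example: two "gunshot" spikes that reach recording b 3 samples later.
a = [0.0] * 20
a[5] = a[12] = 1.0
b = [0.0] * 20
b[8] = b[15] = 1.0
print(best_lag(a, b, max_lag=6))  # 3
```

Once the offset between each pair of videos is known, their timelines can be merged, so a gunshot heard in one video can be matched to the same shot in another.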

All of VERA's components run through a web interface that supports human-in-the-loop verification, letting analysts check intermediate results to help ensure accurate estimates.

We are open source!

All relevant source code, including the web interface and machine learning models, is freely available on GitHub. We hope that researchers and software developers will be inspired to improve and expand this system to better serve the needs of human rights and public safety work.