Amateur Drone Videos Could Aid in Natural Disaster Damage Assessment

It wasn't long after Hurricane Laura hit the Gulf Coast Thursday that people began flying drones to record the damage and posting videos on social media. Those videos are a precious resource, say researchers at Carnegie Mellon University, who are working on ways to use them for rapid damage assessment.

By using artificial intelligence, the researchers are developing a system that can automatically identify buildings and make an initial determination of whether they are damaged and how serious that damage might be.

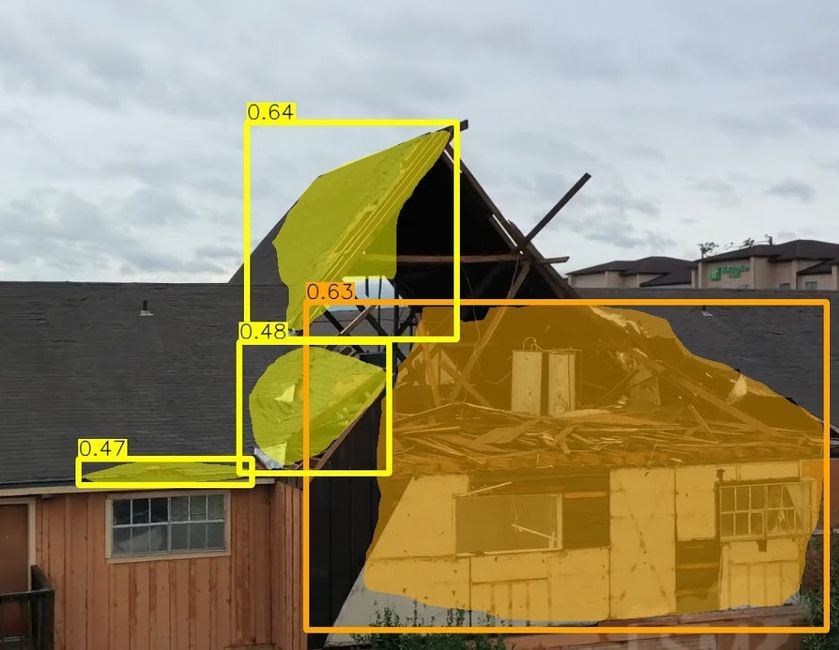

A team of CMU researchers is using AI to develop a system that automatically identifies buildings in drone footage of natural disasters and determines whether they're damaged. The system can identify whether the damage is slight (yellow) or serious (orange), or whether the building has been destroyed.

"Current damage assessments are mostly based on individuals detecting and documenting damage to a building," said Junwei Liang, a Ph.D. student in CMU's Language Technologies Institute (LTI). "That can be slow, expensive and labor-intensive work."

Satellite imagery doesn't provide enough detail and shows damage from only a single, vertical viewpoint. Drones, however, can gather close-up information from a number of angles and viewpoints. First responders could, of course, fly drones for damage assessment themselves, but drones are now widely available among residents and are routinely flown after natural disasters.

"The number of drone videos available on social media soon after a disaster means they can be a valuable resource for doing timely damage assessments," Liang said.

Xiaoyu Zhu, a master's student in AI and Innovation in the LTI, said the initial system can overlay masks on parts of the buildings in the video that appear damaged and determine if the damage is slight or serious, or if the building has been destroyed.
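For readers curious what overlaying severity masks on a video frame might look like in practice, here is a minimal sketch in Python with NumPy. It is not MSNet's code and does not detect damage; it only illustrates the display step, assuming per-severity boolean masks have already been produced by some model. The function name `overlay_damage_masks`, the `alpha` parameter and the red color for destroyed buildings are assumptions made for illustration; only the yellow and orange colors come from the figure caption above.

```python
import numpy as np

# Yellow and orange follow the figure caption. The color for "destroyed"
# is not given in the article, so red is an assumption for illustration.
SEVERITY_COLORS = {
    "slight": (255, 255, 0),    # yellow
    "serious": (255, 165, 0),   # orange
    "destroyed": (255, 0, 0),   # red (assumed)
}


def overlay_damage_masks(frame, masks, alpha=0.5):
    """Blend per-severity masks onto an RGB frame (hypothetical helper).

    frame: uint8 array of shape (H, W, 3)
    masks: dict mapping a severity label to a boolean array of shape (H, W)
    alpha: blend weight of the mask color, between 0 and 1
    """
    out = frame.astype(np.float32)
    for label, mask in masks.items():
        color = np.array(SEVERITY_COLORS[label], dtype=np.float32)
        # Boolean indexing selects the masked pixels; the color broadcasts
        # across their RGB channels.
        out[mask] = (1.0 - alpha) * out[mask] + alpha * color
    return out.round().astype(np.uint8)


# Toy example: a black 4x4 frame with a 2x2 patch marked as slightly damaged.
frame = np.zeros((4, 4, 3), dtype=np.uint8)
slight = np.zeros((4, 4), dtype=bool)
slight[:2, :2] = True
tinted = overlay_damage_masks(frame, {"slight": slight})
```

A semi-transparent blend is used here so the underlying building stays visible beneath the tint; a real system would apply this per frame to masks predicted by its model.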

The team will present their findings at the Winter Conference on Applications of Computer Vision (WACV 2021), which will be held virtually next year.

The researchers, led by Alexander Hauptmann, an LTI research professor, downloaded drone videos of hurricane and tornado damage in Florida, Missouri, Illinois, Texas, Alabama and North Carolina. They then annotated the videos to identify building damage and assess the severity of the damage.

The resulting dataset, the first to use drone videos to assess building damage from natural disasters, was used to train the AI system, called MSNet, to recognize building damage. The dataset is available to other research groups via GitHub.

The videos don't yet include GPS coordinates, but the researchers are working on a geolocation scheme that would enable users to quickly identify where the damaged buildings are, Liang said. This would require training the system using images from Google Street View. MSNet could then match the location cues learned from Street View to features in the video.

The National Institute of Standards and Technology sponsored this research.