CMU Makes Facial Image Analysis Software Available to Researchers

Fast, Powerful Software Is Efficient Enough To Run on Smartphones

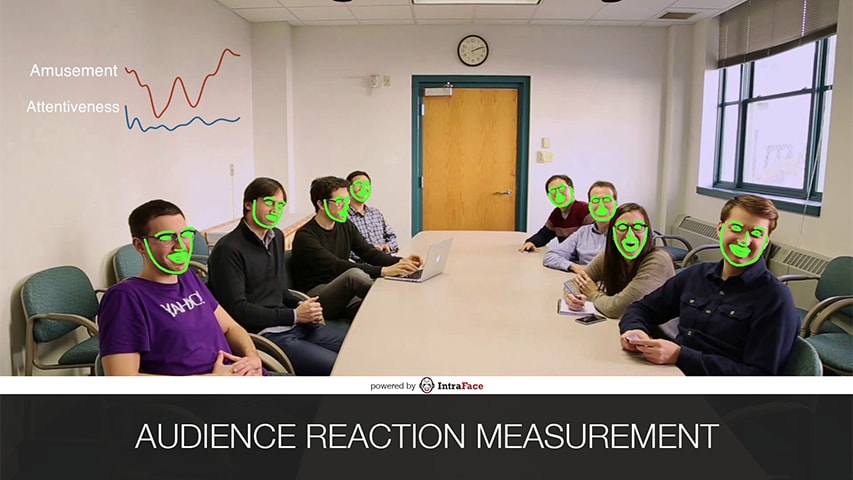

Byron Spice / 412-268-9068 / bspice@cs.cmu.edu

CMU's advanced software for tracking facial features can measure audience reaction in real time.

Carnegie Mellon University’s Human Sensing Laboratory will celebrate the new year by making available to fellow researchers its advanced software for tracking facial features and recognizing emotions, filling a gap that has slowed the development of real-time facial image analysis applications.

Automated facial analysis is at the heart of a host of potential applications, from monitoring the emotional state of patients to detecting whether a public speaker is losing an audience’s attention. Fernando De la Torre, associate research professor in the Robotics Institute, said releasing the latest version of the software, called IntraFace, will help expand those applications by giving researchers access to its state-of-the-art capabilities.

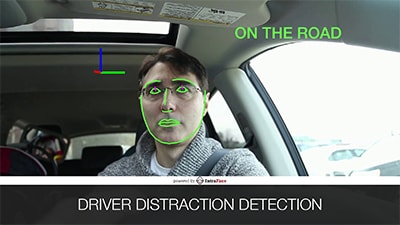

An app created by CMU researchers detects when a driver becomes distracted behind the wheel.

“IntraFace provides a breakthrough in facial feature tracking that simplifies the problem of facial image analysis, working rapidly, accurately and with such efficiency that it can run on most smartphones,” De la Torre said. “Now it’s time to develop new applications for this technology. We have a few of our own, but we believe there are lots of people who may have even better ideas once they get their hands on it.”

Researchers at Duke University, for instance, already have incorporated IntraFace into a research app that will test the reliability of facial expression analysis as a screening tool for autism.

The new software will be available in February at the Human Sensing Lab website, released as a package that makes it easier to use. In the meantime, free demonstration apps, which show how IntraFace can identify facial features and detect emotions, can be downloaded from the lab site, from Apple’s App Store for iPhones or from Google Play for Android phones.

Automated facial expression analysis has been a long-standing goal of computer vision researchers and tremendous advances have occurred over the last 20 years, De la Torre said. Commercial software and analysis services are now available but often can be difficult to use. They also are computationally intensive, and their performance can vary from one individual to another.

IntraFace is the result of a decade of work by De la Torre and his colleagues, including Jeffrey Cohn, a professor of psychology and psychiatry at the University of Pittsburgh and an adjunct professor in CMU’s Robotics Institute.

To increase its efficiency and help it work reliably with most faces, the researchers used machine learning techniques to train the software to recognize and track facial features. The researchers then created an algorithm that can take this generalized understanding of the face and personalize it for an individual, enabling expression analysis.

The result is that IntraFace is both accurate and fast. It occupies less computer memory than other methods and requires less power to run, making it suitable for use on a wide range of platforms, including smartphones and embedded systems.

The potential applications are many, including distracted or drowsy driver detection, automated analysis of marketing focus groups, animation of avatars in multi-player video games, human-robot interaction, and monitoring or detection of depression, anxiety and other disorders.

Over the last two years, as De la Torre and his team have begun presenting their work at scientific conferences, the software has been downloaded thousands of times and elements of the patent-pending technology have been incorporated into some commercial apps. The technology can be used for research purposes for free; licensing for commercial use is available.