The Behrmann Lab has moved to the University of Pittsburgh!

Find us at: https://live-behrmannlab-pitt.pantheonsite.io/

Dr. Marlene Behrmann is a Professor of Psychology at Carnegie Mellon University, whose research specializes in the cognitive basis of visual perception, with a specific focus on object recognition. Widely considered to be a trailblazer and a worldwide leader in the field of visual cognition, she was inducted into the National Academy of Sciences in 2015 and into the American Academy of Arts and Sciences in 2019.

Below are some recent publications from the lab:

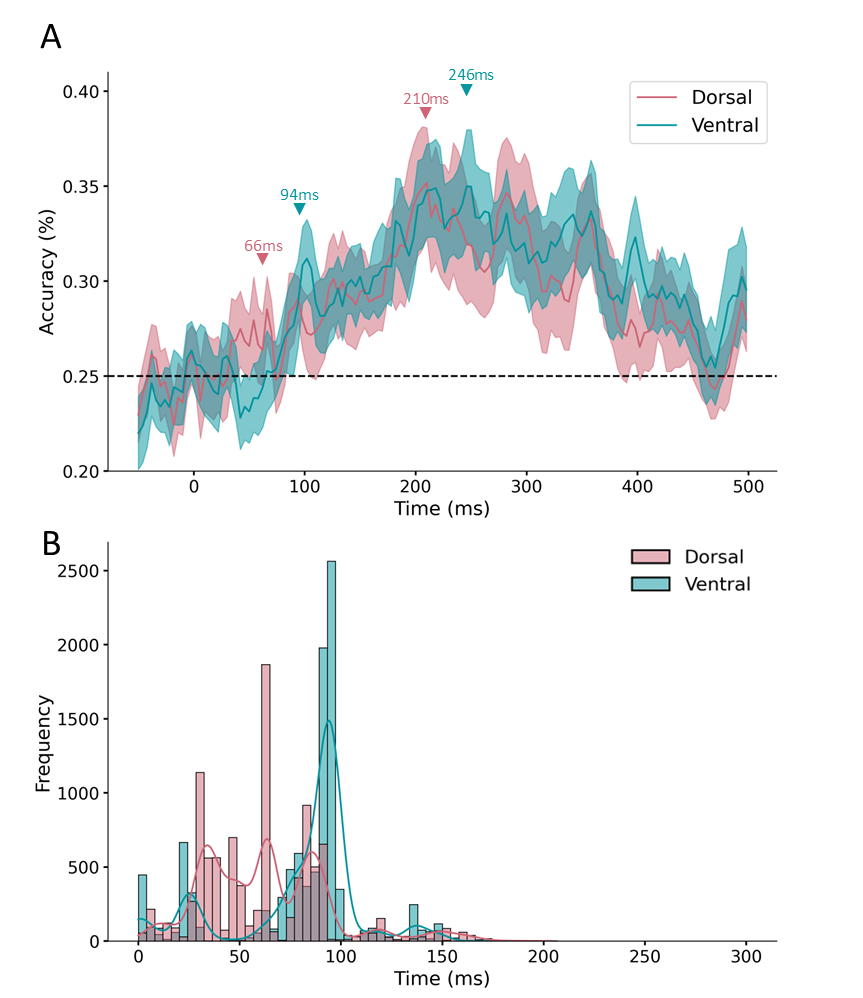

High-density EEG decoding analyses. (A) Time course of category decoding for (red) dorsal and (blue) ventral channels. Classification accuracy is plotted along the y-axis as a function of time (ms). Data are plotted from the pre- to post-stimulus period (−50 to 500 ms). Shaded regions indicate the standard error of the mean (SE). (B) A histogram illustrating the earliest onset of above-chance classification for 10 000 resamples for (red) dorsal and (blue) ventral channels. The y-axis illustrates the proportion with which each time point exhibited above-chance classification accuracy. Data are plotted for the stimulus period (0–300 ms).

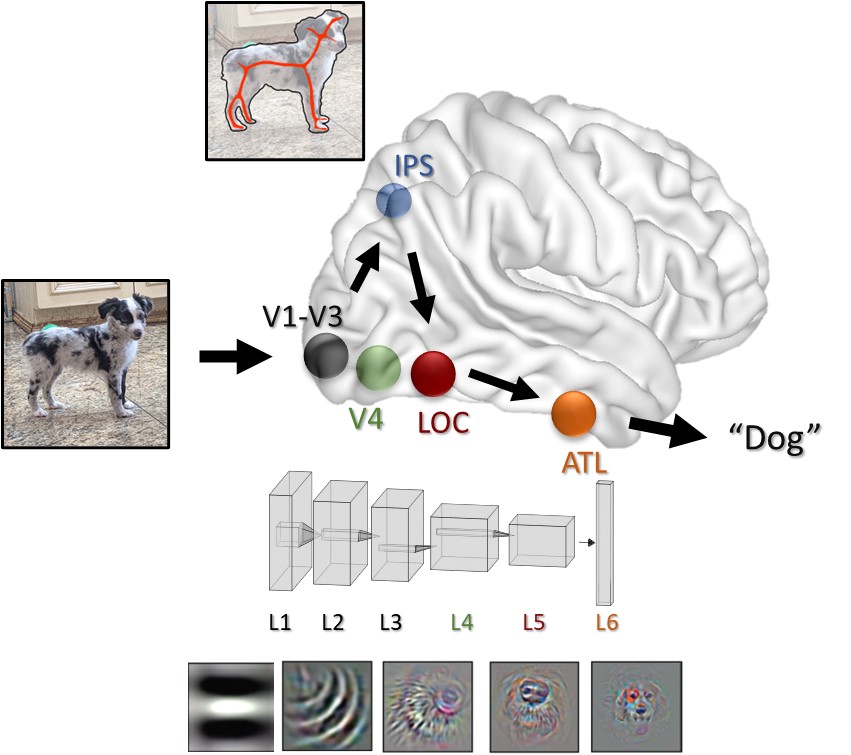

An expanded brain network for object recognition. In this schematic depiction of the visual system, the ventral pathway (V1 to ATL) acts much like a DNN (bottom) – extracting increasingly complex local object features, but not a complete shape. Instead, structural information describing the global shape of an object, but not its individual features (top; depicted as a red skeleton), may be computed in dorsal visual pathway regions such as IPS. This information is then sent to the ventral pathway to form a complete object representation.

We strive to foster an inclusive and welcoming environment where everyone feels respected and valued. I encourage individuals from under-represented minority groups who may be interested in short-term projects, PhD programs, or postdoctoral training to reach out to me by email (behrmann [at] cmu [dot] edu) to discuss opportunities.

The Psychology Department’s full Statement of Community Standards can be found here.