Hiding the Devilishness of Detail

Many years ago, the human factors department at Bell Labs asked a group of consumers to draw a picture of how they thought the telephone network connected two phones together after they dialed a phone number.  The vast majority drew something like the picture at right. We, who knew in detail how the network actually worked, laughed at their naivete. Around the same time, the ARPANET creators envisioned a “universal resource locator” (URL) that would enable a user to locate any other user or computer in the world with a simple text string. These two examples illustrate a key principle: when dealing with unbounded complexity that you can’t possibly understand, a good abstraction can be the best substitute for deep understanding. The simple diagram abstracted the thousands of complex telephone switches and transmission lines that blanket the world. The URL abstracted the complex network of servers, switches, routers, load balancers, mirror sites, and domain name servers that make up the internet and cloud computing today.

As edge computing emerges, it introduces new complexity. Now, a user’s proximity to edge computing resources matters. Edge computing economics and market structure also mean that the edge computing provider and the resources it provides can vary considerably over time and space. For the edge-native application provider, finding the “right” edge computing node or cloudlet to serve a specific user for a specific session can be non-trivial. The application has technical requirements for latency, bandwidth, compute performance, software compatibility, data storage, and other attributes. The attributes that determine whether a given cloudlet can satisfy these requirements are both static (e.g., what type of GPU is in the server?) and dynamic (e.g., how many other users are on it right now?). Moreover, the cloudlet’s static and dynamic capabilities might be intentionally hidden from the application provider for business, regulatory, or technical reasons.
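To make the matching problem concrete, here is a minimal Python sketch of checking one cloudlet against an application's requirements. It is an illustration only, not part of Sinfonia: the names `AppRequirements`, `CloudletView`, and `satisfies`, and the three attributes chosen, are all hypothetical. A value of `None` stands for a capability the provider has chosen not to disclose.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class AppRequirements:
    """Hypothetical requirements an edge-native application might state."""
    max_latency_ms: float
    required_gpu: str
    max_active_sessions: int


@dataclass
class CloudletView:
    """What the app provider can see of a cloudlet; None means 'not disclosed'."""
    name: str
    gpu_model: Optional[str]        # static attribute
    latency_ms: Optional[float]     # dynamic attribute
    active_sessions: Optional[int]  # dynamic attribute


def satisfies(view: CloudletView, req: AppRequirements) -> Optional[bool]:
    """Return True or False when decidable, None when hidden attributes block a decision."""
    checks = [
        (view.gpu_model, lambda v: v == req.required_gpu),
        (view.latency_ms, lambda v: v <= req.max_latency_ms),
        (view.active_sessions, lambda v: v <= req.max_active_sessions),
    ]
    unknown = False
    for value, ok in checks:
        if value is None:
            unknown = True       # attribute is hidden; cannot confirm it
        elif not ok(value):
            return False         # a visible attribute fails outright
    return None if unknown else True
```

The three-valued result reflects the opacity problem: when any attribute is hidden and no visible attribute fails, the app provider cannot decide on its own, which is one reason to delegate the choice to an intermediary that does have the information.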

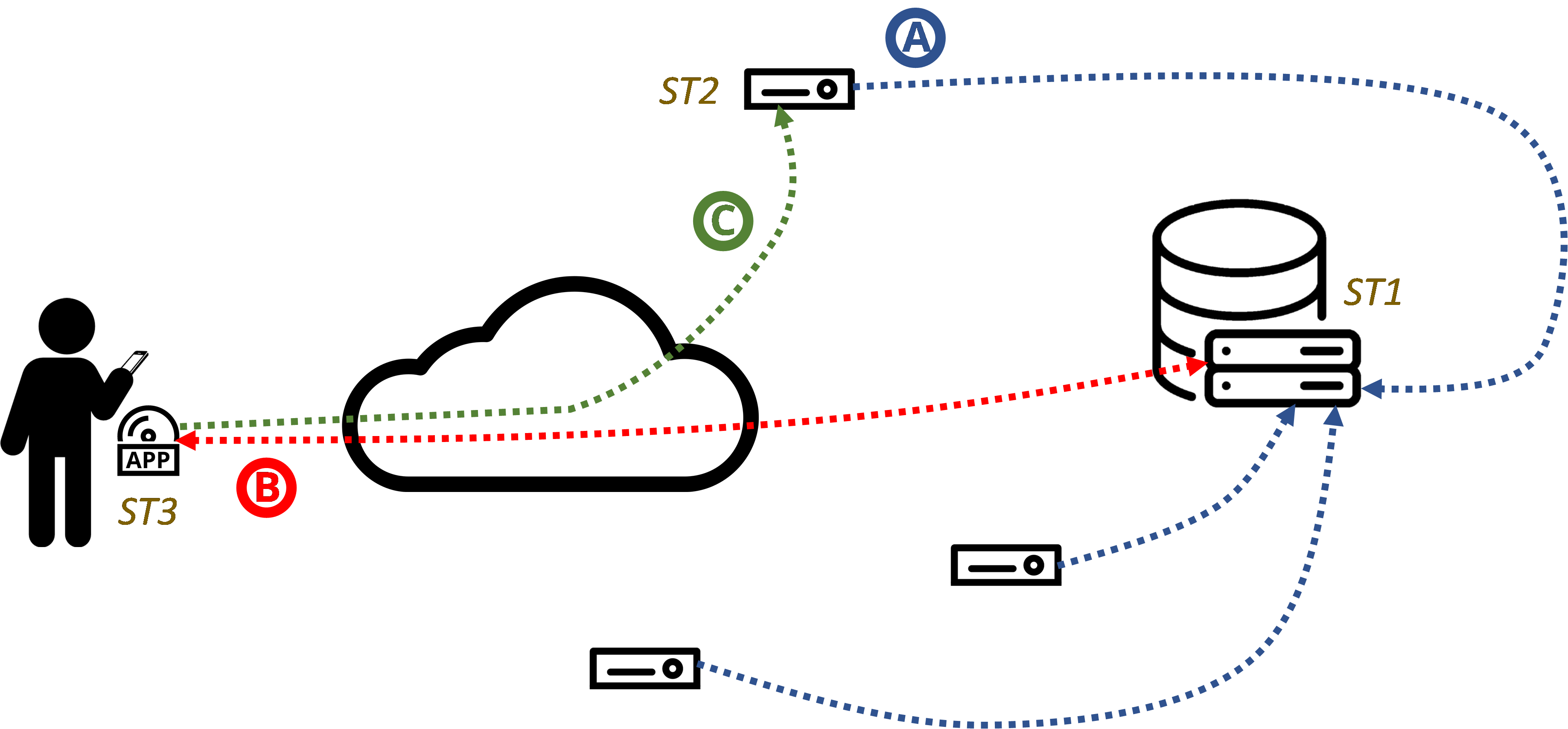

Here at the Living Edge Lab, we believe that this combination of complexity and resource opacity calls for a new abstraction at the boundary between edge-native application providers and edge computing infrastructure providers. This abstraction must easily and simply provide the app provider with a small set of candidate cloudlet endpoints without the app provider needing any knowledge of what and where the cloudlets are. Our Sinfonia project, led by Jan Harkes in collaboration with Meta and ARM, focuses on the development and evaluation of an infrastructure-facing component and an application-facing component. The Sinfonia architecture is shown in Figure 1.

- The infrastructure-facing component enables cloudlet providers at Sinfonia Tier 2 (ST2) to register their available resources with a Sinfonia broker at Sinfonia Tier 1 (ST1) and to update the current resource state on an ongoing basis.

- The Sinfonia broker serves as a root of orchestration. When requested by the application-facing component at Sinfonia Tier 3 (ST3), it is responsible for identifying a shortlist of candidate cloudlets from the registry and delivering it to the application. The logic for creating the shortlist can be as simple as picking the cloudlets closest to the user’s location or as complicated as the broker and app provider require.

- After receiving the shortlist, the application establishes a user session with the desired cloudlet from the shortlist. The logic for selecting from the shortlist can be as simple as choosing the first in the list or as sophisticated as the application provider requires.
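The three steps above can be sketched in a few lines of Python. This is a toy model under stated assumptions, not Sinfonia's actual interface: the `Cloudlet` and `Broker` classes, the `free_slots` field, the `example.net` endpoints, and the function names are all hypothetical. It uses the simplest policies the text mentions, with the broker ranking by distance to the user and the application taking the first candidate.

```python
import math
from dataclasses import dataclass


@dataclass
class Cloudlet:
    endpoint: str    # hypothetical endpoint name
    lat: float
    lon: float
    free_slots: int  # stand-in for dynamic resource state


def distance_km(lat1, lon1, lat2, lon2):
    """Great-circle distance between two points (haversine formula)."""
    r = 6371.0  # mean Earth radius in km
    p1, p2 = math.radians(lat1), math.radians(lat2)
    dphi = p2 - p1
    dlam = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(p1) * math.cos(p2) * math.sin(dlam / 2) ** 2
    return 2 * r * math.asin(math.sqrt(a))


class Broker:
    """Toy ST1 broker: keeps a registry and answers shortlist requests."""

    def __init__(self):
        self.registry = {}

    def register(self, cloudlet):
        # ST2 -> ST1: a cloudlet provider announces its resources.
        self.registry[cloudlet.endpoint] = cloudlet

    def update(self, endpoint, free_slots):
        # ST2 -> ST1: ongoing update of the current resource state.
        self.registry[endpoint].free_slots = free_slots

    def shortlist(self, user_lat, user_lon, k=2):
        # ST3 -> ST1: return up to k candidates with capacity, nearest first.
        available = [c for c in self.registry.values() if c.free_slots > 0]
        available.sort(key=lambda c: distance_km(user_lat, user_lon, c.lat, c.lon))
        return [c.endpoint for c in available[:k]]


def choose(candidates):
    """Simplest application-side policy: take the first candidate, if any."""
    return candidates[0] if candidates else None


broker = Broker()
broker.register(Cloudlet("pgh.example.net", 40.44, -79.99, free_slots=4))
broker.register(Cloudlet("nyc.example.net", 40.71, -74.01, free_slots=2))
broker.register(Cloudlet("lax.example.net", 34.05, -118.24, free_slots=8))

candidates = broker.shortlist(user_lat=40.44, user_lon=-80.00)
selected = choose(candidates)
```

Note that the application never sees coordinates or capacity. It receives only a list of endpoints, so the broker can change its ranking logic without any change on the application side.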

We have developed an initial implementation of Sinfonia, which is available here, and our paper describing it is available here. We are presenting Sinfonia at the virtual ARM/DevSummit 2022. Sinfonia is still at an early stage, but we see an opportunity for it to become the de facto approach to edge-application orchestration in the future.

For more information:

- Satyanarayanan, Mahadev, Jan Harkes, Jim Blakley, Marc Meunier, Govindarajan Mohandoss, Kiel Friedt, Arun Thulasi, Pranav Saxena, and Brian Barritt. "Sinfonia: Cross-Tier Orchestration for Edge-Native Applications." Frontiers in the Internet of Things (2022): 5

- Sinfonia@Github code repository

- Sinfonia: Cross-Tier Orchestration for Edge-Native Applications, ARM/DevSummit 2022.