Opening Edge Computing’s Black Box

In the early days of the Living Edge Lab, Wi-Fi was our go-to access network for edge computing research. When it was good, it was very good, but when it was bad, it was very bad. Under ideal conditions, Wi-Fi has good throughput and round-trip latency under 5ms. But when congestion and interference occur, any predictability goes out the window. There’s no way to control all those wild Wi-Fi hotspots around campus. Also, Wi-Fi isn’t particularly good at mobility or coverage in large-scale outdoor areas. We began to use commercial 4G LTE mobile networks in addition to Wi-Fi for our research work. That got us mobility and widespread outdoor coverage, but with round-trip latencies in the 50 to 200ms range. Commercial mobile networks are also subject to congestion, often have geographically diverse interconnect points that add latency, and are opaque to a researcher trying to understand what’s going on inside. Our need for low latency, predictability, mobility, and transparency led us to build the private CBRS 4G LTE mobile network that I talked about in my blog, “The Quest for Lower Latency”.

The network is giving us a predictable 30 to 35ms round-trip latency and good coverage in the outdoor areas around campus. But we’re never satisfied – we want a mobile network with round-trip latencies less than 20ms. The lower we can get, the more edge-native applications become feasible; for example, augmented reality apps need very low motion-to-photon latencies to give an adequate user experience. As our first step toward continual improvement in round-trip latency, we launched a project to measure latency for each of the large network segments in the Living Edge Lab. We wanted to “pareto” the segments, ranking them by their contribution to the total, to understand where to focus our latency optimization efforts.

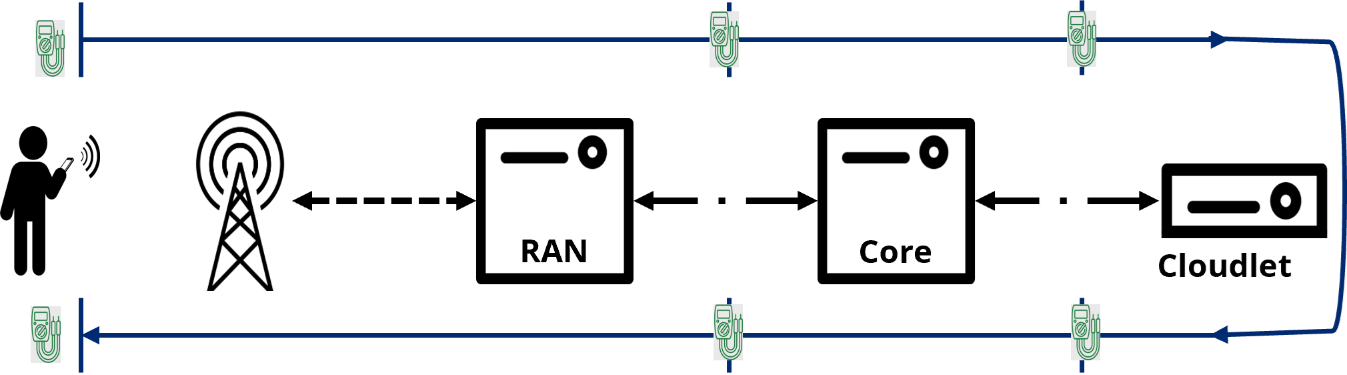

The Network Latency Segmentation Project, led by students Sophie Smith and Ishan Darwhekar, implemented network measurement probes at various points in the network: at the user equipment (UE), between the Radio Access Network (RAN) and the Evolved Packet Core (EPC), and between the EPC and the Cloudlet. See Figure 1.

They developed a network synchronization method to enable correlation of packets generated by network traffic, along with a database and dashboard to monitor the latency of each segment in real time. They then ran a series of experiments using this framework. The project and results are presented in a new CMU Technical Report, “Segmenting Latency in a Private 4G LTE Network”.
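To make the idea of correlating packets across probes concrete, here is a minimal sketch in Python. It is not the project's code: it assumes each probe has already logged a capture timestamp (in microseconds, on synchronized clocks) for every packet it saw, keyed by a packet identifier. The probe names, the log layout, and the sample values are all hypothetical.

```python
from statistics import median

# Hypothetical probe names, listed in the order an uplink packet passes them.
PROBE_ORDER = ["ue", "ran_epc", "epc_cloudlet"]


def segment_latencies_ms(logs, order=PROBE_ORDER):
    """Return {segment: [latency_ms, ...]} for packets seen at both ends of a segment.

    `logs` maps probe name -> {packet_id: capture_time_us}. Clocks are assumed
    to be synchronized already; this sketch does no clock correction.
    """
    result = {}
    for src, dst in zip(order, order[1:]):
        src_log, dst_log = logs[src], logs[dst]
        # Only packets captured at both probes can be correlated.
        common = sorted(src_log.keys() & dst_log.keys())
        result[f"{src}->{dst}"] = [(dst_log[p] - src_log[p]) / 1000 for p in common]
    return result


def median_by_segment(latencies):
    """Median latency per segment, skipping segments with no matched packets."""
    return {seg: median(vals) for seg, vals in latencies.items() if vals}


# Made-up capture times (microseconds) for three uplink packets.
example_logs = {
    "ue": {1: 0, 2: 100_000, 3: 200_000},
    "ran_epc": {1: 24_000, 2: 127_000, 3: 225_000},
    "epc_cloudlet": {1: 25_000, 2: 128_000},  # packet 3 was not captured here
}

uplink = segment_latencies_ms(example_logs)
uplink_medians = median_by_segment(uplink)
print(uplink_medians)
```

Matching on a packet identifier only works if the same packet can be recognized at every probe, and the subtraction is only meaningful if the probe clocks agree, which is why the synchronization step matters so much.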

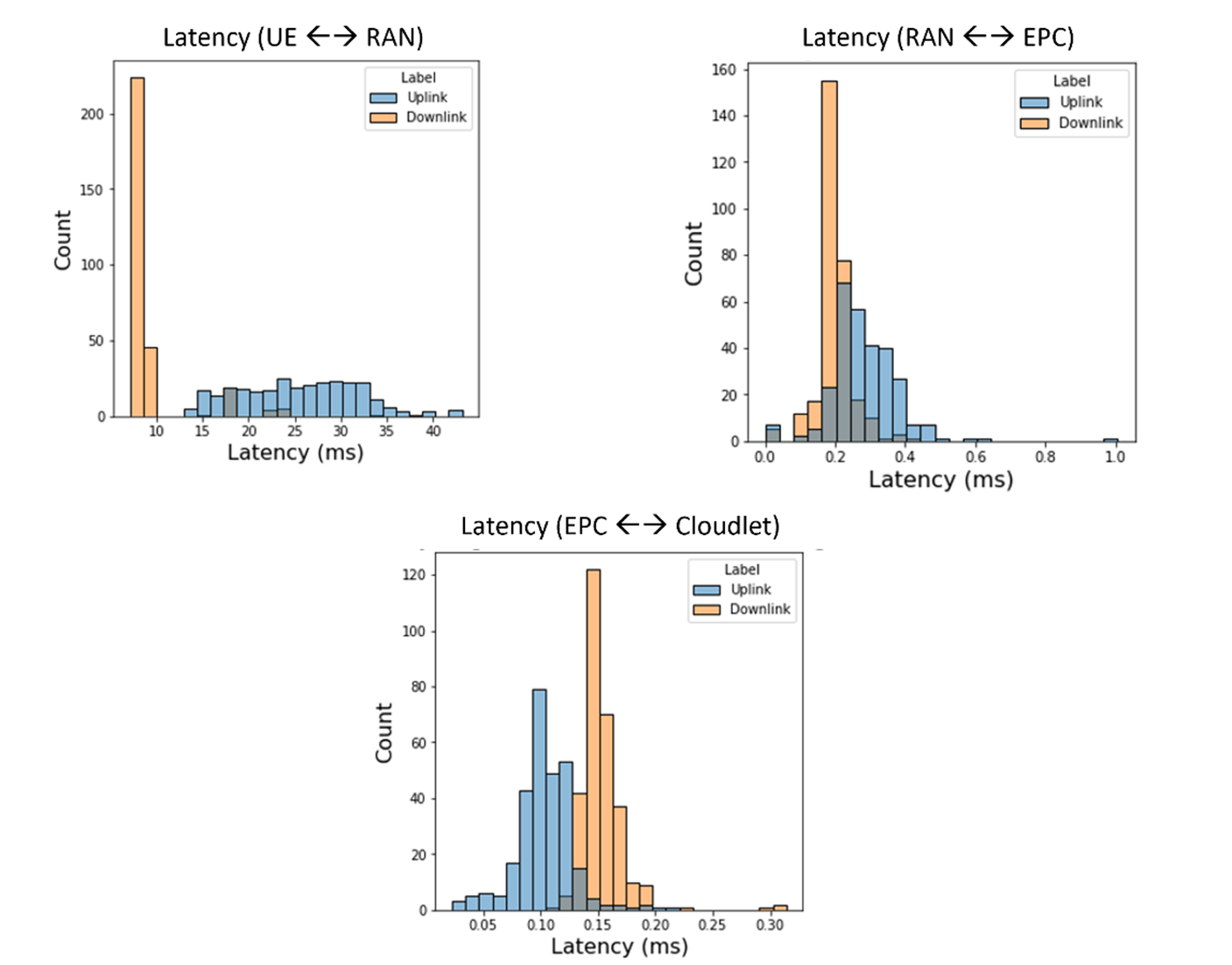

The most significant finding was that the UE-RAN uplink median latency was around 25ms while the RAN-UE downlink was around 8ms. Together, that is about 33ms, almost the entire 35ms round trip! The EPC and cloudlet (not including any application-specific processing) contribute less than 2ms. The other observation is that the variance (or jitter) in the uplink latency is substantial and accounts for most of the round-trip jitter. See Figure 2 for uplink and downlink latencies for each of the segments.

Figure 2 – Network Segmentation Results
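As a rough illustration of the “pareto” view of these numbers, the sketch below ranks the segments by their share of the round trip. It uses only the approximate medians quoted above and treats the “less than 2ms” EPC and cloudlet contribution as a 2ms upper bound, which is an assumption; the function name and labels are illustrative.

```python
def pareto(contributions_ms):
    """Rank segments by latency; return (name, ms, share %, cumulative %) rows."""
    total = sum(contributions_ms.values())
    ranked = sorted(contributions_ms.items(), key=lambda kv: kv[1], reverse=True)
    rows, running = [], 0.0
    for name, ms in ranked:
        running += ms
        rows.append((name, ms, 100.0 * ms / total, 100.0 * running / total))
    return rows


# Approximate medians from the post; 2.0 is an assumed upper bound.
segments_ms = {
    "EPC + cloudlet": 2.0,
    "UE-RAN uplink": 25.0,
    "RAN-UE downlink": 8.0,
}

rows = pareto(segments_ms)
for name, ms, share, cumulative in rows:
    print(f"{name:16s} {ms:5.1f} ms  {share:5.1f}%  cumulative {cumulative:5.1f}%")
```

With these inputs the uplink ranks first at roughly 71% of the total, and the uplink and downlink radio segments together account for about 94%.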

This result points us to the 4G LTE uplink channel scheduling algorithms as the probable cause of the uplink issues. We’re beginning to look at ways we might optimize the UE-RAN uplink while we wait for 5G-readiness. We believe that 5G will bring significant improvement, but we’d still like to see how well we can do on 4G.

The approaches we used in this project didn’t require any proprietary or encrypted access inside the RAN or Core. We tapped in at open points in the 4G LTE architecture. That means that other private network operators could apply the same techniques to get data for their networks. See the technical report, project presentation video, poster, and open-source code for more details.